Navigating Batch Effects in Multi-Platform Methylation Studies: From Detection to Cross-Platform Harmonization



Abstract

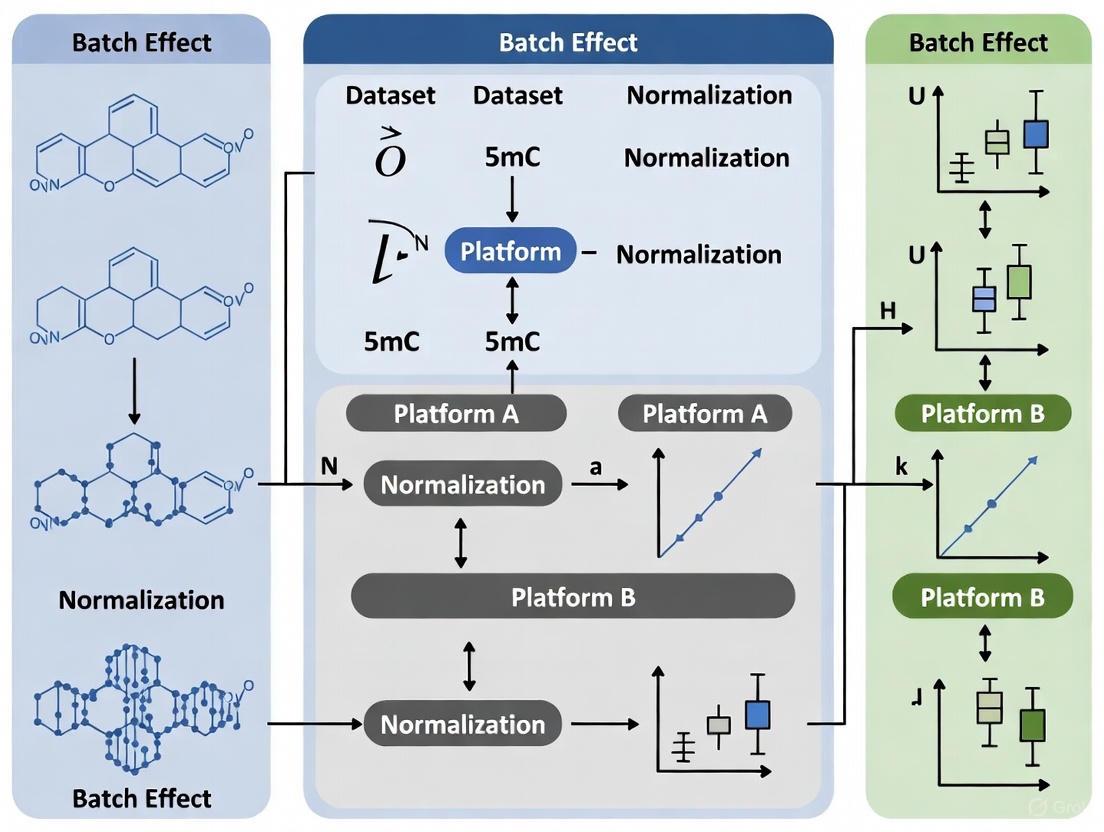

Integrating DNA methylation data from diverse platforms like microarrays, bisulfite sequencing, and nanopore sequencing is essential for large-scale epigenomic studies but introduces significant technical batch effects that can compromise data integrity and biological discovery. This article provides a comprehensive framework for researchers and drug development professionals to address these challenges. We explore the foundational sources of batch effects across major profiling technologies, evaluate established and novel correction methodologies including ComBat variants and machine learning approaches, and present optimization strategies for robust multi-platform analysis. Furthermore, we examine validation techniques and comparative performance of harmonization methods, highlighting emerging solutions for cross-platform classification to enhance reproducibility in clinical and translational research.

Understanding Batch Effect Origins in Diverse Methylation Platforms

Troubleshooting Guides and FAQs

What are the main sources of batch effects in DNA methylation data?

Technical variation arises from multiple sources throughout the experimental workflow. Key sources include:

- Sample processing batches: Samples processed at different times or by different personnel [1]

- Array positional effects: Physical position of samples on the Illumina BeadChip array [2]

- Bisulfite conversion efficiency: Variations in the chemical conversion of unmethylated cytosines [3] [4]

- Platform differences: Technical variability when using different array generations (450K, EPICv1, EPICv2) [5]

How can I identify if my dataset has significant batch effects?

Several assessment methods can reveal batch effects:

- Principal Component Analysis (PCA): Visualize clustering by batch rather than biological group [1]

- Association testing: Calculate proportion of CpGs significantly associated with batch (p < 0.01) [1]

- Technical replicate analysis: Evaluate variation between replicate samples [2]

- Unsupervised hierarchical clustering: Check if samples cluster by technical rather than biological factors [1]
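As a rough illustration of the association-testing idea, the sketch below screens each CpG for batch association using eta-squared (between-batch sum of squares divided by total sum of squares). This is a stand-in that avoids p-values, not the p < 0.01 test cited above; the function names and the 0.5 screening threshold are assumptions chosen for the example.

```python
from statistics import fmean


def batch_eta_squared(values, batches):
    """Fraction of one CpG's variance explained by batch membership."""
    grand = fmean(values)
    total_ss = sum((v - grand) ** 2 for v in values)
    if total_ss == 0:
        return 0.0  # constant probe: nothing to explain
    groups = {}
    for v, b in zip(values, batches):
        groups.setdefault(b, []).append(v)
    between_ss = sum(len(g) * (fmean(g) - grand) ** 2 for g in groups.values())
    return between_ss / total_ss


def fraction_batch_associated(matrix, batches, threshold=0.5):
    """Share of CpGs (rows) whose eta-squared exceeds a hypothetical threshold."""
    flagged = sum(batch_eta_squared(row, batches) > threshold for row in matrix)
    return flagged / len(matrix)
```

A large flagged fraction suggests that batch, rather than biology, dominates the variation; a formal test would replace the threshold with an F-test or regression p-value.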

Which batch effect correction method is most effective for DNA methylation data?

The optimal method depends on your data characteristics:

| Method | Best For | Key Considerations |

|---|---|---|

| ComBat-met | DNA methylation β-values specifically | Uses beta regression framework for [0,1]-constrained data [3] |

| ComBat | Known batch effects with normal distribution assumptions | Requires M-value transformation; effective for positional effects [2] |

| Functional Normalization | Leveraging control probes | Removes technical variation using control probe data [5] |

| Empirical Bayes (EB) | Datasets with obvious batch effects | Works well following normalization [1] |

How does array type affect data comparability in longitudinal studies?

Array differences introduce technical variability:

| Array Type | CpG Coverage | Key Considerations for Cross-Platform Studies |

|---|---|---|

| 450K | 485,577 probes | Baseline for many historical datasets [5] |

| EPICv1 | 866,552 probes | 93.5% probe overlap with 450K [5] |

| EPICv2 | 937,690 probes | Additional cancer-informed CpGs; careful probe filtering needed [5] |

Recent studies show that 17.5% of CpGs demonstrate significant array bias, and epigenetic age estimates are more stable when using principal component versions of epigenetic clocks across platforms [5].

Experimental Protocols

Protocol 1: Assessing Batch Effects with Principal Variance Component Analysis (PVCA)

Purpose: Quantify the proportion of variance attributable to batch effects versus biological factors [2].

Methodology:

- Data Preparation: Normalize β-values using your preferred method (e.g., functional normalization)

- Variance Components Analysis: Apply PVCA to partition variance among factors

- Interpretation: Calculate the percentage of variance explained by batch versus biological variables

- Decision Point: If batch explains >10% of variance, correction is recommended

Expected Outcomes: One study found batch effects explained substantial variation across multiple datasets, with 52,988 CpG loci significantly associated with sample positions in the primary dataset [2].
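The final aggregation step of PVCA can be sketched as follows, assuming the per-component variance fractions have already been estimated (for example, by mixed models fitted to each retained principal component). The function names and input layout are assumptions for illustration; the 10% cutoff is the decision point stated in the protocol.

```python
def pvca_weighted_proportions(eigenvalues, per_pc_fractions):
    """Average each factor's per-PC variance fraction, weighted by eigenvalue.

    eigenvalues: one eigenvalue per retained principal component.
    per_pc_fractions: one dict per component mapping factor name -> fraction
    of that component's variance attributed to the factor.
    """
    total = sum(eigenvalues)
    factors = per_pc_fractions[0].keys()
    return {
        f: sum(w * pc[f] for w, pc in zip(eigenvalues, per_pc_fractions)) / total
        for f in factors
    }


def batch_correction_recommended(proportions, batch_factor="batch", cutoff=0.10):
    """Apply the protocol's decision rule: correct if batch explains > cutoff."""
    return proportions[batch_factor] > cutoff
```

Weighting by eigenvalue ensures that factors driving the largest components contribute most to the overall estimate.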

Protocol 2: ComBat-met Batch Effect Correction

Purpose: Remove batch effects while preserving the statistical properties of DNA methylation β-values [3].

Methodology:

- Model Fitting: Fit beta regression models to the data accounting for batch effects

- Parameter Estimation: Calculate batch-free distributions using maximum likelihood estimation

- Quantile Matching: Map quantiles of estimated distributions to batch-free counterparts

- Validation: Verify biological signals are maintained while batch effects are reduced

Implementation:
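As a rough illustration of the quantile-matching step only, the sketch below maps each value in a batch to the same quantile of a reference sample. It uses empirical quantiles instead of fitted beta-regression distributions, so it is a simplified non-parametric stand-in and not ComBat-met itself; it also ignores biological covariates, which a real correction must model. All function names are assumptions.

```python
import math


def empirical_quantile(sorted_ref, q):
    """Linear-interpolated quantile of an already sorted reference sample."""
    pos = q * (len(sorted_ref) - 1)
    lo = int(math.floor(pos))
    hi = min(lo + 1, len(sorted_ref) - 1)
    frac = pos - lo
    return sorted_ref[lo] * (1 - frac) + sorted_ref[hi] * frac


def quantile_match(values, reference):
    """Map each value to the reference quantile matching its within-batch rank."""
    ref = sorted(reference)
    order = sorted(range(len(values)), key=lambda i: values[i])
    n = len(values)
    out = [0.0] * n
    for rank, i in enumerate(order):
        q = (rank + 0.5) / n  # mid-rank quantile, strictly inside (0, 1)
        out[i] = empirical_quantile(ref, q)
    return out
```

Because the output is interpolated between reference values, adjusted β-values stay inside the range of the reference and therefore inside [0, 1], which is the property the beta-regression approach is designed to preserve.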

The Scientist's Toolkit: Research Reagent Solutions

| Reagent/Resource | Function | Application Notes |

|---|---|---|

| Illumina DNA Methylation BeadChips | Genome-wide methylation profiling | Choose appropriate platform (450K/EPICv1/EPICv2) based on study needs [2] [5] |

| Bisulfite Conversion Kits | Convert unmethylated cytosines to uracils | Ensure DNA purity; particulate matter affects conversion efficiency [4] |

| Zymo Research EZ DNA Methylation Kit | Bisulfite treatment of DNA | Follow manufacturer's protocols for different DNA input amounts [5] |

| Qiagen DNeasy DNA Blood & Tissue Kit | DNA extraction from samples | Standardized extraction minimizes technical variation [5] |

| Platinum Taq DNA Polymerase | Amplification of bisulfite-converted DNA | Proof-reading polymerases not recommended for uracil-containing templates [4] |

Workflow Diagrams

[Diagrams not reproduced: Batch Effect Assessment and Correction Workflow; Methylation Data Processing Pipeline; Cross-Platform Methylation Analysis Strategy]

FAQ: Understanding Platform-Specific Biases

Q: What are the fundamental differences in how microarrays and sequencing technologies measure DNA methylation?

A: The core difference lies in their detection principles. Microarrays, like the Illumina Infinium MethylationEPIC BeadChip, use hybridization. Fluorescently labeled bisulfite-converted DNA binds to complementary probes on a solid surface, with methylation status (reported as a β-value from 0 to 1) determined by the ratio of fluorescent signals from methylated vs. unmethylated probes [6] [7]. In contrast, sequencing methods like Whole-Genome Bisulfite Sequencing (WGBS) or Enzymatic Methyl-Sequencing (EM-seq) use chemical or enzymatic conversion, followed by high-throughput sequencing to provide a digital count of reads at single-base resolution [8] [6] [7].
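The two measurement scales described in this answer can be put side by side in a small sketch. It assumes the commonly used array formula β = M / (M + U + offset) with an offset of 100, and a simple read-count ratio for sequencing; the function names are illustrative.

```python
def array_beta(meth_signal, unmeth_signal, offset=100.0):
    """Array beta-value from methylated (M) and unmethylated (U) intensities.

    The offset (100 is a widely used default) stabilises the ratio when
    both intensities are low.
    """
    return meth_signal / (meth_signal + unmeth_signal + offset)


def sequencing_methylation(meth_reads, unmeth_reads):
    """Methylation proportion from digital read counts at one CpG."""
    return meth_reads / (meth_reads + unmeth_reads)
```

With these definitions, `array_beta(900.0, 0.0)` gives 0.9 rather than 1.0 because of the offset, one small reason why array and sequencing values for the same site do not coincide exactly.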

Q: My multi-platform study shows inconsistent results for the same samples. Is this due to batch effects or fundamental platform biases?

A: It could be both. True platform-specific biases exist because each technology interrogates DNA differently. For instance, microarrays have predefined genomic coverage, while sequencing can discover novel sites [6]. Separate from this, batch effects are technical variations introduced by factors like different processing dates, reagent lots, or laboratories [3]. Batch effects can occur within a single platform and are compounded when integrating data from different platforms. It is crucial to apply batch-effect correction methods such as ComBat-met, which is designed for methylation β-values, after accounting for the known biological and technical differences between the platforms [3].

Q: For DNA methylation analysis, which platform is more sensitive for detecting differential methylation in low-input samples?

A: Microarrays are generally robust for low-input DNA, routinely working with 500 ng or less [6]. However, newer sequencing library preparation methods for EM-seq are also advancing and can handle lower input amounts while preserving DNA integrity better than traditional bisulfite sequencing [6]. The choice depends on your need for genome-wide coverage versus the ability to work with degraded samples.

Q: I am observing a high number of sequencing reads that do not perfectly match my reference. Is this a technical artifact?

A: Yes, this is a known technical bias in some NGS platforms. Studies using synthetic RNA samples with known sequences have identified significant "sequence variation" in Illumina sequencing data, where a large proportion of reads contain errors, length variants, or mismatches compared to the original synthetic template [9]. This "cross-sequencing" issue can make it difficult to distinguish between closely related sequences. Pre-processing with quality-aware alignment tools can help, but may reduce sensitivity [9]. This is a platform-specific bias less commonly associated with microarray hybridization.

Troubleshooting Guides

Issue 1: High Discrepancy in Methylation Calls Between Microarray and Sequencing

Symptoms: β-values from microarray data and methylation proportions from sequencing data for the same genomic region and sample show poor correlation.

Diagnosis and Solutions:

- Step 1: Verify Genomic Coordinate Alignment. Ensure you are comparing precisely the same CpG sites. Microarray probes can sometimes cross-hybridize to regions with high sequence similarity, while sequencing reads might misalign in repetitive regions [9] [6].

- Step 2: Check for Probe-Type Bias. On Illumina arrays, two different probe chemistries (Infinium I and II) are used, which can introduce bias. Ensure proper normalization has been applied to the array data [6].

- Step 3: Assess Coverage Depth. For sequencing, low read depth at a CpG site leads to unreliable methylation estimates. Filter out sites with coverage below a minimum threshold (e.g., 10x) [7].

- Step 4: Investigate Regional Context. Biases are more pronounced in specific genomic contexts. Check if discrepancies are concentrated in regions with high GC-content, repetitive elements, or known structural variations, where both technologies can struggle [6].
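The coverage filter in Step 3 can be sketched as below. The input layout (a dict of per-site read counts) and the function name are assumptions for illustration; the 10x default follows the example threshold given above.

```python
def methylation_proportions(site_counts, min_coverage=10):
    """Keep CpG sites with adequate depth and return their methylation proportion.

    site_counts maps a site identifier to (methylated_reads, unmethylated_reads).
    Sites with total depth below min_coverage are dropped.
    """
    result = {}
    for site, (meth, unmeth) in site_counts.items():
        depth = meth + unmeth
        if depth >= min_coverage:
            result[site] = meth / depth
    return result
```

Applying the filter before comparing against array β-values avoids penalising the correlation with sites whose sequencing estimate rests on only a handful of reads.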

Issue 2: Severe Batch Effects When Merging Datasets from Different Platforms

Symptoms: Principal Component Analysis (PCA) or other unsupervised clustering methods show samples grouping strongly by technology platform (e.g., all microarray samples cluster together, separate from all sequencing samples), obscuring the biological signal of interest.

Diagnosis and Solutions:

- Step 1: Do NOT Correct by Platform. Treating the platform itself as a generic "batch" to be corrected can remove genuine biological signal along with technical bias. Platform is a known technical variable and must be handled differently from unknown, unwanted batch effects [3].

- Step 2: Use Cross-Platform Normalization Methods. Apply batch-effect correction frameworks specifically designed for methylation data that can handle its bounded distribution (β-values between 0 and 1). The standard ComBat tool assumes normally distributed data and is therefore not ideal for untransformed β-values.

- Recommended Tool: Use ComBat-met, a beta regression framework that models the specific characteristics of β-values and maps quantiles to a batch-free distribution without assuming normality [3].

- Step 3: Validate with Negative Controls. Use known negative control samples or regions that should not be differentially methylated between platforms to assess the success of the correction. The correlation should improve post-correction.

Issue 3: Poor Concordance in Copy Number Variation (CNV) Calls

Symptoms: CNV assessments for genes like EGFR or CDKN2A/B in gliomas show different results when using FISH, NGS, or DNA Methylation Microarray (DMM) [10].

Diagnosis and Solutions:

- Step 1: Understand Platform Strengths. FISH is targeted and has lower resolution, while NGS and DMM provide genome-wide profiles. DMM infers CNV from methylation array intensity data and shows high concordance with NGS for specific CNV markers [10]. Discordance with FISH is expected in high-grade gliomas with high genomic instability.

- Step 2: Implement an Integrated Diagnostic Approach. Do not rely on a single platform. For critical clinical diagnostics, use a multi-platform strategy where CNV calls from one platform (e.g., NGS) are validated by another (e.g., DMM) [10].

- Step 3: Manually Review Problematic Regions. For cases with known genomic instability or complex rearrangements, manually inspect the raw data (e.g., B-allele frequency and log R ratio for arrays, read depth and paired-end reads for NGS) instead of relying solely on automated calling algorithms.

Experimental Protocols for Bias Assessment

Protocol 1: Cross-Platform Validation Using Orthogonal Methods

Objective: To validate findings from one platform (e.g., microarray) using another technology (e.g., sequencing) or a gold-standard method like pyrosequencing.

Materials:

- High-quality genomic DNA sample(s).

- Microarray platform (e.g., Illumina EPIC v2).

- Sequencing platform (e.g., for WGBS or EM-seq).

- Reagents for bisulfite conversion (e.g., EZ DNA Methylation Kit).

- Key Reagent: Pyrosequencing assay for target regions.

Methodology:

- Split the same DNA sample and process it in parallel for the microarray and sequencing assays, following manufacturers' protocols.

- For both platforms, perform standard data processing and normalization.

- Identify a set of CpG sites common to both platforms.

- For a subset of significantly discordant sites, design and run pyrosequencing assays as an orthogonal validation.

- Calculate correlation coefficients (Pearson or Spearman) between the β-values from the initial platform and the pyrosequencing results, and again with the second platform.
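The correlation step can be sketched with the standard library alone. The helper names are illustrative; Spearman is computed as Pearson on average ranks, so tied β-values are handled.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def average_ranks(values):
    """1-based ranks, with tied values sharing the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation (Pearson correlation of average ranks)."""
    return pearson(average_ranks(x), average_ranks(y))
```

Spearman is often the safer choice when one platform compresses values near 0 or 1, since it depends only on the ordering of sites.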

Protocol 2: Evaluating Technical Performance with Synthetic Controls

Objective: To assess the absolute quantification accuracy, sensitivity, and specificity of a platform using synthetic RNA/DNA samples with known concentrations.

Materials:

- Synthetic RNA oligo pools with precisely known concentrations (e.g., 744 oligos mimicking microRNA diversity) [9].

- The platform(s) under investigation (e.g., Microarray and NGS sequencer).

Methodology:

- Create two or more synthetic samples by mixing the oligo pools in different, predefined ratios. This creates known absolute concentrations and expected log2 ratios [9].

- Process these synthetic samples on the microarray and sequencing platforms.

- Data Analysis:

- Calculate the correlation (r) between the measured expression/intensity and the known RNA concentration for absolute quantification.

- Calculate the correlation between the observed log2 ratios and the expected log2 ratios for relative quantification.

- Compare the sensitivity (detection rate at low concentrations) and reproducibility between technical replicates for each platform [9].

Data Presentation: Platform Comparison Tables

Table 1: Technical Comparison of Major DNA Methylation Profiling Methods

| Feature | Illumina Methylation EPIC Array | Whole-Genome Bisulfite Sequencing (WGBS) | Enzymatic Methyl-Sequencing (EM-seq) | Oxford Nanopore (ONT) |

|---|---|---|---|---|

| Resolution | Pre-defined CpG sites (~935,000) | Single-base (theoretical full genome) | Single-base (theoretical full genome) | Single-base (direct detection) |

| DNA Input | ~500 ng [6] | ~1 μg [6] | Lower than WGBS [6] | ~1 μg (8 kb fragments) [6] |

| DNA Degradation | Subject to bisulfite degradation [6] | Subject to bisulfite degradation [6] | Preserves DNA integrity [6] | No conversion needed [7] |

| Key Strengths | Cost-effective, standardized analysis, high throughput [6] [7] | Gold standard for comprehensive coverage [8] | Better coverage uniformity than WGBS, less DNA damage [8] [6] | Long reads, detects modifications directly [6] [7] |

| Key Limitations | Limited to pre-designed probes, cross-hybridization risk [9] [6] | High cost, computational burden, bisulfite-induced bias [6] | Still relies on conversion (enzymatic) | Higher raw error rate [6] |

Table 2: Quantitative Performance Comparison of Microarray and RNA-Seq from a Representative Study [11]

| Performance Metric | Microarray | RNA-Seq |

|---|---|---|

| Genes Detected (after filtering) | 15,828 | 22,323 |

| Differentially Expressed Genes (DEGs) Identified | 427 | 2,395 |

| Shared DEGs | 223 (shared between both) | 223 (shared between both) |

| Perturbed Pathways Identified | 47 | 205 |

| Median Pearson Correlation with shared genes | 0.76 | 0.76 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Methylation and Transcriptomics Studies

| Reagent / Kit | Function | Application Notes |

|---|---|---|

| EZ DNA Methylation Kit (Zymo Research) | Bisulfite conversion of unmethylated cytosines to uracils. | Standard for pre-processing DNA for both microarray and bisulfite sequencing methods [6]. |

| NEBNext Ultra II RNA Library Prep Kit for Illumina (New England Biolabs) | Prepares RNA sequencing libraries for next-generation sequencing. | Used for transcriptome analysis via RNA-Seq [11]. |

| PAXgene Blood RNA Kit | Stabilizes and purifies intracellular RNA from whole blood. | Critical for preserving accurate gene expression profiles from clinical blood samples [11]. |

| GLOBINclear Kit (Ambion) | Depletes globin mRNA from whole blood RNA samples. | Reduces background noise and improves detection of non-globin transcripts in blood samples [11]. |

| Nanobind Tissue Big DNA Kit (Circulomics) | Extracts high-molecular-weight DNA from tissue. | Suitable for long-read sequencing technologies like Nanopore that require long, intact DNA strands [6]. |

Experimental Workflow and Decision Diagrams

[Diagrams not reproduced: Diagram 1: Platform Bias Troubleshooting Flowchart; Diagram 2: ComBat-met Batch Effect Correction Workflow [3]]

A technical guide for researchers navigating the impact of probe chemistry on data reliability in methylation studies.

This guide addresses the critical technical differences between Infinium I and Infinium II probe designs on Illumina Methylation BeadChips (e.g., 450K, EPIC). Understanding these differences is essential for effective experimental design, data preprocessing, and accurate interpretation of results, particularly in the context of multi-platform studies where batch effects are a major concern [12].

FAQ: Why Do Probe Design Differences Matter?

Q: What is the fundamental technical difference between Infinium I and II probes?

A: The core difference lies in the number of probes and color channels used to interrogate a single CpG site [13] [14].

- Infinium I Probes use two separate probe sequences (beads)—one for the methylated allele (M) and one for the unmethylated allele (U). Base extension is the same for both, and the color channel (red or green) is determined by the nucleotide adjacent to the target cytosine [12].

- Infinium II Probes use a single probe sequence for both alleles. The methylation state is determined at the single-base extension step, which incorporates a dye-labeled nucleotide. This design confounds the red/green channel signal with the methylation measurement itself; typically, the green channel (Cy3) signal corresponds to methylated bases, and the red channel (Cy5) corresponds to unmethylated bases [12] [14].

Q: How does this design difference impact data quality and susceptibility to batch effects?

A: The Infinium II design, while more economical and allowing for higher density on the array, introduces specific technical vulnerabilities:

- Reduced Dynamic Range: Infinium II probes consistently show a reduced dynamic range of measured methylation values (β-values) compared to Infinium I probes [13] [12]. This is presumed to be because the single bead for both alleles is prone to residual emission from the other dye, compressing the signal [12].

- Dye Bias Susceptibility: Because the methylation measurement is directly tied to the ratio of two different dye signals (Cy3 and Cy5), Infinium II probes are inherently more susceptible to technical artifacts like dye bias and photodegradation [12]. Cy5 is known to be more prone to ozone degradation than Cy3, which can systematically affect Infinium II measurements [12].

- Probe-Type Bias: The two chemistries produce distinct β-value distributions. This is a major source of within-array bias that must be corrected through normalization during preprocessing [15].

The table below summarizes the key comparative characteristics of the two probe types.

| Feature | Infinium I Probes | Infinium II Probes |

|---|---|---|

| Probes per CpG | Two (M & U) [13] [14] | One [13] [14] |

| Color Channel | M and U signals in the same channel [12] | M and U signals in different channels (confounded) [12] |

| Dynamic Range | Wider [13] | Reduced [13] [12] |

| Susceptibility to Dye Bias | Lower | Higher [12] |

| Abundance on EPIC array | ~15% | ~85% [16] |

| Normalization Need | High (to correct for different distributions vs. Type II) [15] | High (to correct for different distributions vs. Type I) [15] |

Troubleshooting Guide: Addressing Probe-Related Issues

Issue 1: High Technical Variance and Unreliable Probes

Problem: Data shows high variability between technical replicates, potentially driven by low-reliability probes.

Solutions:

- Identify Probes with Low Mean Intensity: Probes with low signal intensity (the average of methylated and unmethylated signals) exhibit higher β-value variability between replicates and are more likely to provide unreliable measurements [16] [13]. Mean intensity is negatively correlated with proposed "unreliability scores" [16].

- Use Dynamic Thresholds for Filtering: Instead of relying on a fixed list of "bad" probes, implement a data-driven method that calculates mean intensity and unreliability scores for your specific dataset. Filter out probes that fall below a dynamic threshold for these metrics [16]. An R package is available to facilitate this [16].

- Leverage ICC for Probe Reliability: Assess probe reliability using Intraclass Correlation Coefficients (ICCs) on replicate samples. A significant proportion of probes on the EPIC array show poor reproducibility (ICC < 0.50) [15]. Normalization, particularly with the SeSAMe 2 pipeline, has been shown to dramatically improve ICC estimates [15].

Issue 2: Persistent Batch Effects After Standard Normalization

Problem: Batch effects related to processing day, slide, or array position persist despite standard preprocessing.

Solutions:

- Apply Probe-Type Specific Normalization: Ensure your preprocessing pipeline includes a step specifically designed to correct the different β-value distributions between Infinium I and II probes. Methods like BMIQ (Beta-Mixture Quantile Normalization) are widely used for this purpose [15].

- Filter Known Problematic Probes: Prior to normalization and batch correction, aggressively filter out probes known to be problematic. This includes:

- Cross-reactive probes that map to multiple locations in the genome [13] [12].

- Probes containing SNPs (especially at the targeted CpG site) that can confound methylation measurement with genotype information [13] [12].

- Probes with very high average intensity, as they may artifactually report β-values close to 0.5 [13].

- Use Appropriate Batch Correction Tools: When applying batch correction methods like ComBat, always use M-values for the adjustment, as their unbounded nature is more statistically valid for such procedures. After correction, convert the data back to β-values for interpretation [12] [17]. Newer methods like ComBat-met, which uses a beta regression framework tailored for β-values, may offer improved performance [3].
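The β-to-M round trip mentioned in the last point uses the logit transform M = log2(β / (1 − β)). A minimal sketch follows; the clamping constant `eps` is an assumption added to keep the logarithm finite at β = 0 or 1.

```python
import math


def beta_to_m(beta, eps=1e-6):
    """Convert a beta-value to an M-value, clamping away from exactly 0 and 1."""
    b = min(max(beta, eps), 1 - eps)
    return math.log2(b / (1 - b))


def m_to_beta(m):
    """Convert an M-value back to a beta-value."""
    return 2 ** m / (2 ** m + 1)
```

In a typical workflow, batch adjustment would be run on `beta_to_m` output and the adjusted values passed through `m_to_beta` before reporting.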

Issue 3: Integrating Data from Different Array Platforms or Batches

Problem: Combining datasets from 450K and EPIC arrays, or from multiple processing batches, introduces strong technical variation that can obscure biological signals.

Solutions:

- Prioritize Balanced Study Design: The ultimate antidote to confounded batch effects is a balanced design where biological groups are distributed evenly across arrays and processing batches [17]. If this is not possible, extreme caution is required during batch correction.

- Implement Incremental Batch Correction: For longitudinal studies where data is added over time, use an incremental framework like iComBat. This allows new batches to be adjusted to a reference without altering previously corrected data, ensuring consistency across the project timeline [18].

- Leverage Conserved Probes for Cross-Species/Species-Specific Studies: For non-human mammalian studies, the Mammalian Methylation Array uses a design that tolerates cross-species mutations via degenerate bases, facilitating more reliable comparisons across species [19].

Experimental Protocols for Assessing Probe Reliability

Protocol 1: Evaluating Probe Performance Using Technical Replicates

Objective: To identify unreliable CpG probes by assessing their reproducibility across technical replicate samples.

Materials:

- Technical replicate samples (from the same DNA source) [16] [15].

- Standard Illumina Methylation BeadChip processing reagents and equipment [16].

- R/Bioconductor packages (e.g., minfi, meffil) [14] [15].

Methodology:

- Profile Technical Replicates: Process technical replicate samples across different arrays or batches to capture technical variance [16].

- Calculate Reliability Metrics:

- Mean Intensity (MI): Compute the average of the methylated and unmethylated signal intensities for each probe in each sample [16].

- Unreliability Score: Simulate the influence of technical noise on β-values using the background intensities of negative control probes to generate a probe-specific unreliability score [16].

- Intraclass Correlation Coefficient (ICC): Calculate ICC for each probe across the technical replicates to quantify reproducibility [15].

- Establish Dynamic Thresholds: Determine optimal thresholds for MI and unreliability scores specific to your dataset. Probes falling below the MI threshold or above the unreliability threshold should be flagged for exclusion [16].

- Validate with Biological Replicates: Use paired longitudinal samples (e.g., blood samples from the same individual taken weeks apart) to distinguish technical variability from true biological intra-individual variation [16].
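Two of the metrics above can be sketched in a few lines. The ICC shown is the one-way random-effects form, ICC(1,1); the protocol does not say which ICC variant the cited evaluation used, so this choice is an assumption, as are the function names.

```python
from statistics import fmean


def mean_intensity(meth_signal, unmeth_signal):
    """Mean intensity (MI): average of methylated and unmethylated signals."""
    return (meth_signal + unmeth_signal) / 2


def icc_oneway(measurements):
    """One-way random-effects ICC(1,1) for a single probe.

    measurements: one list per DNA source, each holding k replicate beta-values
    (the same k for every source). Raises ZeroDivisionError if all values are
    identical, because the variance components are then undefined.
    """
    n = len(measurements)
    k = len(measurements[0])
    grand = fmean([v for row in measurements for v in row])
    subject_means = [fmean(row) for row in measurements]
    msb = k * sum((m - grand) ** 2 for m in subject_means) / (n - 1)
    msw = sum(
        (v - m) ** 2 for row, m in zip(measurements, subject_means) for v in row
    ) / (n * (k - 1))
    return (msb - msw) / (msb + (k - 1) * msw)
```

Probes whose ICC falls below a chosen cutoff, such as the 0.50 level mentioned earlier for poor reproducibility, would then be flagged alongside those failing the MI threshold.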

Protocol 2: Systematic Normalization Method Comparison

Objective: To identify the optimal normalization method for a given dataset that best corrects for probe-type bias and other technical artifacts.

Materials:

- A methylation dataset including technical replicates [15].

- R/Bioconductor packages with multiple normalization methods (e.g., minfi, wateRmelon, SeSAMe) [15].

Methodology:

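- Apply Multiple Normalizations: Process the raw data using several of the normalization methods provided by the packages listed above.

- Evaluate Performance Metrics: For each normalized dataset, calculate reproducibility metrics on the technical replicates, such as per-probe ICC [15].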

- Select Best-Performing Method: Choose the normalization method that minimizes technical variance between replicates while preserving expected biological signals. Recent systematic evaluations have found SeSAMe 2 to be a top-performing method, while quantile-based methods often perform poorly [15].

Analytical Workflow for Probe Susceptibility

[Diagram not reproduced: logical workflow for diagnosing and addressing probe-level susceptibility issues in methylation data analysis.]

| Item / Resource | Function / Application |

|---|---|

| R/Bioconductor Packages | Open-source software for comprehensive methylation data analysis (e.g., minfi, ChAMP, SeSAMe, ENmix) [16]. |

| Unreliability Score R Package | Calculates data-driven metrics (Mean Intensity & Unreliability Scores) to flag problematic probes for a given dataset [16]. |

| List of Cross-Reactive Probes | A predefined list of probes that non-specifically bind to multiple genomic locations; used for filtering [13] [15]. |

| List of SNP-Containing Probes | A predefined list of probes where a Single Nucleotide Polymorphism overlaps the probe sequence or target CpG; used for filtering [13] [15]. |

| Technical Replicate Samples | Aliquots from the same DNA source used to assess technical variance and probe reliability [16] [15]. |

| Reference-Based Batch Correction | Methods like ComBat-met that adjust all batches to a designated reference batch, improving data integration [3]. |

Frequently Asked Questions

1. What are biological confounders in DNA methylation studies? Biological confounders are inherent biological variables that can create systematic variations in your data, which may be mistaken for or obscure the biological signal of interest. The two primary types are cellular heterogeneity (the presence of multiple cell types in a sample) and genetic variation (individual genetic differences that influence methylation patterns) [20] [21]. Failure to account for these can lead to false positives or false negatives in differential methylation analysis.

2. How does cellular heterogeneity differ from a technical batch effect? While both introduce unwanted variation, they originate from different sources. Batch effects are technical artifacts arising from experimental procedures, such as differences in reagent lots, sequencing runs, or personnel [22]. Cellular heterogeneity is a biological reality, reflecting the diversity of cell types within a tissue sample [20]. If the composition of cell types differs between your case and control groups, this biological difference can confound the analysis.

3. My study uses whole blood. How critical is it to account for cellular heterogeneity? It is highly critical. Whole blood is a mixture of various cell types (e.g., neutrophils, lymphocytes, monocytes), each with a distinct methylation profile [21]. If your compared groups (e.g., disease vs. healthy) have different underlying cell type compositions, any observed methylation differences are likely confounded by this heterogeneity. Methods to adjust for this include using a reference dataset to estimate cell counts or including cell type composition as a covariate in statistical models.

4. Can genetic variation really impact DNA methylation analysis? Yes, significantly. Genetic variants, such as Single Nucleotide Polymorphisms (SNPs), can create or destroy CpG sites and influence local methylation patterns via mechanisms known as methylation quantitative trait loci (mQTLs) [21]. Probes on microarray platforms like the Illumina EPIC array can also hybridize less efficiently in the presence of a genetic variant, leading to technically biased measurements that are misinterpreted as biological methylation differences.

5. What are the signs that my data may be affected by these confounders?

- Cellular Heterogeneity: Your data shows strong clustering or association with known demographic variables (e.g., age, sex) that are also linked to immune cell composition [20] [21].

- Genetic Variation: You notice that significant hits are enriched near known genetic risk loci for the disease you are studying, suggesting the signal may be genetically driven rather than purely epigenetic [21].

- General Confounding: Uncontrolled confounding often manifests as inflation of test statistics (e.g., a high lambda value in an EWAS) even when no true associations are present [21].
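The inflation check in the last point is usually summarised by the genomic inflation factor λ: the median observed chi-square statistic divided by the median of a chi-square distribution with one degree of freedom (about 0.4549). A minimal sketch, assuming the EWAS produced one z-score per CpG; the function name is illustrative.

```python
from statistics import median

# Median of the chi-square distribution with 1 degree of freedom.
CHI2_1DF_MEDIAN = 0.4549364


def genomic_inflation(z_scores):
    """Genomic inflation factor (lambda) from per-CpG z-scores."""
    return median([z * z for z in z_scores]) / CHI2_1DF_MEDIAN
```

Values near 1 are consistent with well-controlled confounding; values well above 1 suggest that cell composition, batch, or population structure is inflating the test statistics.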

Troubleshooting Guides

Issue 1: Suspected Cellular Heterogeneity Confounding

Detection and Diagnosis:

- Visualization: Perform a Principal Component Analysis (PCA) on your methylation data and color the samples by key demographic variables (age, sex, BMI). Strong clustering by these variables can indicate underlying cellular heterogeneity is a major source of variation [20].

- Association Testing: Statistically test the association between the first few principal components of your methylation data and variables like age and sex. A significant association is a red flag [1].

Solutions and Methodologies:

- Estimate Cell Counts: For blood samples, use established reference-based algorithms (e.g., Houseman's method) to estimate the proportions of specific leukocyte subsets from your methylation data.

- Incorporate as Covariates: Include the estimated cell proportions as covariates in your linear regression model for differential methylation analysis.

- Use a Custom Reference: For tissues other than blood, if a cell-type-specific methylome reference is available, you can adapt reference-based estimation methods.

Table 1: Statistical Power Guidelines for EPIC Array Studies. Adapted from [21].

| Sample Size | Minimum Detectable Effect Size (Δβ) | Use Case Scenario |

|---|---|---|

| ~ 100 samples | ~ 0.10 | Pilot studies, large expected effects |

| ~ 500 samples | ~ 0.04 | Moderately powered EWAS |

| ~ 1000 samples | ~ 0.02 | Well-powered to detect small differences at most sites |

Issue 2: Suspected Genetic Variation Confounding

Detection and Diagnosis:

- Probe Filtering: Prior to analysis, rigorously filter your probe list. Remove probes known to (a) contain SNPs at the CpG site or at the single-base extension site, (b) cross-hybridize to multiple genomic locations, or (c) be located on sex chromosomes if not relevant to the study [21]. This pre-emptive step is crucial.

- Post-hoc Colocalization Analysis: If you identify significant hits, check whether they lie near, or are in linkage disequilibrium with, known GWAS loci for your trait of interest, for example by overlapping them with GWAS Catalog entries.
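
The probe filtering step above amounts to a set difference. The sketch below assumes the beta-values are held in a dictionary keyed by probe ID and that the exclusion lists have already been loaded as sets; the probe IDs and the function name are placeholders, not real annotation content.

```python
def filter_probes(beta_by_probe, exclusion_lists):
    """Drop probes found in any exclusion list; return kept data and the removed IDs."""
    excluded = set().union(*exclusion_lists)
    kept = {pid: vals for pid, vals in beta_by_probe.items() if pid not in excluded}
    removed = sorted(set(beta_by_probe) & excluded)
    return kept, removed

# Hypothetical beta-values (two samples per probe) and hypothetical exclusion lists.
beta_by_probe = {
    "cg_example_1": [0.81, 0.79],
    "cg_example_2": [0.45, 0.52],
    "cg_example_3": [0.10, 0.12],
    "cg_example_4": [0.66, 0.64],
}
snp_probes = {"cg_example_2"}
cross_hybridizing = {"cg_example_3", "cg_example_9"}  # IDs absent from the data are ignored

kept, removed = filter_probes(beta_by_probe, [snp_probes, cross_hybridizing])
print(len(kept), removed)
```

Returning the removed IDs alongside the filtered data makes it easy to report how many probes each filter cost you.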

Solutions and Methodologies:

- Employ Robust Normalization: Use normalization methods that are less sensitive to extreme values caused by genetic artifacts.

- Condition on Genotype: In studies where genetic data is also available, the strongest approach is to include the genotype at the specific SNP as a covariate in the methylation model to isolate the epigenetic effect.

- Apply Appropriate Significance Thresholding: Always use a multiple testing correction threshold that accounts for the number of probes tested. For Illumina EPIC arrays, a family-wise error rate (FWER) significance threshold of P < 9 × 10⁻⁸ is recommended [21].
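
To put the recommended threshold in context, the short calculation below compares it with a plain Bonferroni correction. It assumes a 5% family-wise error rate and a round figure of 850,000 probes; both numbers are assumptions made for illustration rather than values taken from [21].

```python
ALPHA = 0.05                   # assumed family-wise error rate
N_EPIC_PROBES = 850_000        # approximate EPIC probe count (assumption)
RECOMMENDED_THRESHOLD = 9e-8   # threshold quoted in the text [21]

# Bonferroni treats every probe as an independent test.
bonferroni_threshold = ALPHA / N_EPIC_PROBES
# Number of independent tests that would yield the recommended threshold under Bonferroni.
implied_independent_tests = ALPHA / RECOMMENDED_THRESHOLD

def is_significant(p_value, threshold=RECOMMENDED_THRESHOLD):
    """True when a probe's p-value passes the experiment-wide threshold."""
    return p_value < threshold

print(f"{bonferroni_threshold:.2e}", round(implied_independent_tests))
```

Under these assumptions the recommended threshold is slightly less strict than Bonferroni, which is consistent with neighbouring CpG sites being correlated rather than fully independent.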

Table 2: Common Methods for Addressing Biological Confounders.

| Method Category | Example Methods | Brief Description | Best for Addressing |

|---|---|---|---|

| Reference-based Deconvolution | Houseman method, EpiDISH | Estimates cell type proportions from bulk tissue data using a reference methylome. | Cellular Heterogeneity |

| Surrogate Variable Analysis | SVA, RUVm | Identifies unmeasured sources of variation (like unknown confounders) from the data itself. | Unknown Confounders, Cellular Heterogeneity |

| Covariate Adjustment | Linear Model Covariates | Directly includes variables like age, sex, or estimated cell counts in the statistical model. | All Known Confounders |

| Probe Filtering | Custom SNP/Cross-hybridization Lists | Removes technically unreliable probes from the analysis. | Genetic Variation |

The following workflow diagram outlines a systematic approach to diagnosing and correcting for these confounders in your data analysis pipeline.

Experimental Protocol: A Combined Workflow for Confounder Adjustment

This protocol provides a detailed methodology for an EWAS that proactively addresses both cellular heterogeneity and genetic variation, suitable for analysis in R.

Step 1: Preprocessing and Quality Control

- Load your beta-value matrix or IDAT files using a package like minfi.

- Perform standard QC: remove samples with low signal, high detection P-values, or outlier status.

- Normalize the data using an appropriate method (e.g., Functional normalization, Dasen).

Step 2: Probe Filtering for Genetic Confounders

- Obtain a list of problematic probes (SNP-associated, cross-hybridizing). These are publicly available for Illumina arrays.

- Remove these probes from your dataset. This step can eliminate a significant source of false positives [21].

Step 3: Diagnosing Cellular Heterogeneity

- Perform PCA on the filtered and normalized methylation data.

- Correlate the top principal components with biological and technical variables (age, sex, batch, sample group).

- For blood samples, estimate cell counts using a package like minfi or EpiDISH.

Step 4: Statistical Modeling for Differential Methylation

- Fit a linear model for each CpG probe. Using R-like notation, a robust model would be: `lm(Methylation ~ Disease_Status + CD8T + CD4T + Neutrophils + Bcell + Mono + Age + Sex + Batch)`

- Where `Disease_Status` is your variable of interest, and the other terms are confounder covariates.

- Use the limma package for improved power and stability in this genome-wide testing context.

Step 5: Interpretation and Validation

- Apply the experiment-wide significance threshold of P < 9 × 10⁻⁸ [21].

- Interpret significant hits in the context of your hypothesis, noting that the model has attempted to isolate the effect of disease from other sources of variation.

The Scientist's Toolkit

Table 3: Essential Reagents and Computational Tools for Managing Biological Confounders.

| Item / Resource | Type | Function / Application |

|---|---|---|

| Illumina EPIC/850k Array | Platform | Genome-wide methylation profiling at >850,000 CpG sites. The primary data generation tool. |

| Reference Methylome Database | Computational | A dataset of cell-type-specific methylation profiles (e.g., for blood cells). Essential for estimating cell proportions from bulk tissue data. |

| Curated Probe Filter List | Computational | A pre-compiled list of probes to exclude due to SNPs or cross-hybridization issues. Critical for mitigating genetic variation confounding [21]. |

| R/Bioconductor Packages | Computational | Software tools like minfi (QC & normalization), limma (differential analysis), and EpiDISH (cell type deconvolution). Form the core of the analysis pipeline. |

| SVA / RUVm Package | Computational | Implements Surrogate Variable Analysis (SVA) or Remove Unwanted Variation (RUV) methods to capture and adjust for unknown sources of confounding [3]. |

Troubleshooting Guides and FAQs

FAQ: Why is detecting batch effects so critical in DNA methylation studies?

Batch effects are technical variations introduced during different experimental runs, by different technicians, or on different platforms. They are not related to the biological question you are studying. If left undetected and uncorrected, these non-biological variations can obscure true biological signals, reduce statistical power, and lead to misleading or irreproducible conclusions. In clinical settings, batch effects have even been known to cause incorrect patient classifications, potentially affecting treatment decisions [23].

FAQ: What are the primary visual signs of batch effects in PCA plots?

In a PCA plot, which reduces high-dimensional data to its principal components, batch effects often manifest as a clear separation of samples by experimental batch rather than by the biological groups you are comparing (e.g., disease vs. control). If samples cluster tightly by their processing date, sequencing lane, or array chip, rather than by phenotype, it is a strong indicator that technical variation is dominating your data [24] [23].

FAQ: We see a batch effect in our hierarchical clustering results. What should we do next?

Observing batches clustering together in a dendrogram confirms the presence of a batch effect. The next step is to apply a statistical batch effect correction method. Popular and effective methods include ComBat and its variants (e.g., ComBat-met for methylation beta-values), which use empirical Bayes frameworks to adjust for batch-specific location and scale parameters. For studies where data is collected incrementally, the newer iComBat method allows for correcting new batches without reprocessing existing data, which is ideal for longitudinal studies [25] [18] [3].

FAQ: Our PCA shows no clear batch separation. Does this mean our data is free of batch effects?

Not necessarily. While a clear batch cluster is an obvious sign, more subtle batch effects can still be present and confound your analysis. These can occur if the batch effect is correlated with a biological variable of interest. It is essential to use statistical tests, such as Pearson’s Chi-squared test, to formally check for an association between the principal components that explain the most variance in your dataset and your known batch variables. A significant p-value indicates that the major sources of variation in your data are linked to batch, even if the visual separation is not stark [24].

FAQ: Are there specific challenges with batch effects in DNA methylation data from different platforms?

Yes. Integrating data from different platforms, such as Illumina Methylation BeadChips (arrays), whole-genome bisulfite sequencing (WGBS), or enzymatic methylation sequencing (EM-seq), is particularly challenging. Each platform has different technical characteristics and covers a different set of CpG sites. Batch effects arising from platform differences can be severe. The first step is often to harmonize the data, keeping only the CpG sites common to all platforms before applying correction methods designed for the specific data type (e.g., beta regression for array beta-values) [26] [3] [24].

Experimental Protocols for Detection

Protocol 1: Principal Component Analysis (PCA) for Batch Effect Detection

This protocol outlines the steps to perform PCA on DNA methylation data to visually and statistically assess batch effects.

Step 1: Data Preparation and Normalization Begin with a normalized matrix of methylation values. For Illumina BeadChip arrays, this is typically the Beta-value matrix (ranging from 0 to 1). Standard preprocessing includes background correction and dye-bias normalization using packages like minfi in R [14]. Ensure your sample sheet includes both your biological conditions and technical batch variables (e.g., processing date, chip row).

Step 2: Perform PCA Filter for the most variable CpG sites (e.g., the top 32,000 sites by standard deviation) to reduce noise and computational load [24]. Use the `prcomp()` function in R on the transposed matrix (so samples are rows and CpGs are columns) to perform PCA.

Step 3: Visual Inspection Create a scatter plot of the first principal component (PC1) against the second principal component (PC2). Color the data points by their known batch identifier (e.g., array chip) and, on the same plot, use different shapes to represent the biological groups. Look for clear clustering of points by color, which indicates a dominant batch effect.

Step 4: Statistical Validation To quantify the visual observation, perform a statistical test. Use Pearson’s Chi-squared test to check for an association between the top N principal components (e.g., the first 10 PCs) that capture significant variance and the batch variable. A significant p-value (< 0.05) confirms that the major source of variation is technically driven [24].
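
Step 4 can be sketched as follows. A chi-squared test needs categorical inputs, so this example dichotomizes each PC's scores at their median and tests the resulting 2x2 table against a two-level batch variable. The dichotomization, the restriction to two batches, the absence of a continuity correction, and the example scores are all assumptions of this sketch; the source does not specify how the PCs were discretized.

```python
import math
from statistics import median

def chi2_pc_vs_batch(pc_scores, batches):
    """Pearson chi-squared test (2x2 table, 1 df) of 'score above median' against batch."""
    labels = sorted(set(batches))
    if len(labels) != 2:
        raise ValueError("this sketch handles exactly two batches")
    cut = median(pc_scores)
    # Observed counts: rows = batch, columns = (score <= median, score > median).
    observed = [[0, 0], [0, 0]]
    for score, batch in zip(pc_scores, batches):
        observed[labels.index(batch)][int(score > cut)] += 1
    n = len(pc_scores)
    row_totals = [sum(row) for row in observed]
    col_totals = [observed[0][c] + observed[1][c] for c in range(2)]
    if 0 in col_totals:
        raise ValueError("all scores fall on one side of the median")
    chi2 = 0.0
    for r in range(2):
        for c in range(2):
            expected = row_totals[r] * col_totals[c] / n
            chi2 += (observed[r][c] - expected) ** 2 / expected
    # Upper tail of a chi-square with 1 df: P(X > x) = erfc(sqrt(x / 2)).
    return chi2, math.erfc(math.sqrt(chi2 / 2.0))

# Hypothetical PC scores for 12 samples run on two chips.
batch = ["chip1"] * 6 + ["chip2"] * 6
pc1 = [-3.1, -2.8, -2.5, -2.9, -3.3, -2.7, 2.6, 3.0, 2.9, 3.2, 2.4, 2.8]   # tracks the chip
pc2 = [-1.0, 1.0, -2.0, 2.0, -1.5, 1.5, -1.2, 1.2, -0.5, 0.5, -2.2, 2.2]   # unrelated to chip

chi2_pc1, p_pc1 = chi2_pc_vs_batch(pc1, batch)
chi2_pc2, p_pc2 = chi2_pc_vs_batch(pc2, batch)
print(p_pc1 < 0.05, p_pc2 < 0.05)
```

When the same test is applied to the first 10 PCs, keep in mind that ten tests at the 0.05 level carry a non-trivial chance of one spurious hit.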

Protocol 2: Hierarchical Clustering for Batch Effect Detection

This protocol uses unsupervised clustering to reveal sample relationships driven by technical artifacts.

Step 1: Data Preparation Similar to the PCA protocol, start with a normalized Beta-value matrix. Calculate a distance matrix between all samples. The Euclidean distance is a common and effective metric for this purpose when working with methylation values [24].

Step 2: Construct the Dendrogram Perform hierarchical clustering on the distance matrix using Ward's method (Ward.D2 in R) as the agglomeration rule. This method tends to create compact, spherical clusters and is effective at revealing batch-driven groupings [24]. Plot the resulting dendrogram.

Step 3: Interpret the Clustering Annotate the branches of the dendrogram with colored bars representing the batch and biological group for each sample. If the primary splits in the tree correspond to technical batches rather than biological conditions, it is strong evidence of a pervasive batch effect that must be addressed before any downstream biological analysis.
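
Before drawing a full dendrogram, a quick numeric check on the distance matrix from Step 1 can already hint at batch-driven structure. The sketch below computes pairwise Euclidean distances and reports how often a sample's nearest neighbour belongs to the same batch. It is a lightweight proxy and does not perform Ward clustering; the beta-values and function names are hypothetical.

```python
import math

def distance_matrix(samples):
    """Pairwise Euclidean distances between samples (each a list of beta-values)."""
    return [[math.dist(a, b) for b in samples] for a in samples]

def same_batch_neighbor_fraction(samples, batches):
    """Fraction of samples whose nearest other sample comes from the same batch."""
    dist = distance_matrix(samples)
    hits = 0
    for i, row in enumerate(dist):
        # Index of the closest sample, excluding the sample itself.
        nearest = min((j for j in range(len(row)) if j != i), key=lambda j: row[j])
        hits += batches[i] == batches[nearest]
    return hits / len(samples)

# Hypothetical beta-values for four samples at three CpG sites.
samples = [
    [0.10, 0.80, 0.50],
    [0.12, 0.79, 0.52],
    [0.30, 0.60, 0.70],
    [0.31, 0.62, 0.69],
]
batches = ["A", "A", "B", "B"]
groups = ["case", "control", "case", "control"]

batch_fraction = same_batch_neighbor_fraction(samples, batches)
group_fraction = same_batch_neighbor_fraction(samples, groups)
print(batch_fraction, group_fraction)
```

A fraction near 1 for batch combined with a low fraction for the biological group points in the same direction as a dendrogram whose first splits follow batch.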

Table 1: Key Statistical Results from a TEEM-Seq Validation Study Demonstrating Data Concordance [24]

| Analysis Type | Metric | Value | Interpretation |

|---|---|---|---|

| Replicate Concordance | Correlation Coefficient (FFPE) | > 0.98 | Very high technical reproducibility between sample replicates. |

| Tumor Classification | Classifier Prediction Score | > 0.82 | Successful and confident classification of tumors into molecular classes. |

| Sequencing Depth | Minimum Depth for FFPE | 35x | Required depth for reliable prediction scores in FFPE samples. |

Table 2: Performance Comparison of Regional Methylation Summary Methods in Simulation [27]

| Simulation Scenario | Detection Rate (Averaging) | Detection Rate (rPCs) | Improvement with rPCs |

|---|---|---|---|

| 25% of CpGs are DM | 19.1% | 73.1% | +54.0% (absolute) |

| 75% of CpGs are DM | 57.4% | 99.0% | +41.6% (absolute) |

| 1% Methylation Difference | 8.4% | 18.8% | +10.4% (absolute) |

| 9% Methylation Difference | 50.1% | 99.7% | +49.6% (absolute) |

Diagnostic Workflows and Relationships

Batch Effect Diagnostic Workflow

Research Reagent Solutions

Table 3: Essential Materials and Tools for Methylation Analysis and Batch Effect Diagnostics

| Item | Function / Description | Example / Note |

|---|---|---|

| Illumina Methylation BeadChip | A microarray platform for genome-wide methylation profiling. Covers over 850,000 CpG sites. | Infinium MethylationEPIC v1.0 BeadChip is a common platform for EWAS [24] [14]. |

| Enzymatic Methyl-Seq (EM-seq) Kit | A library prep method for methylation sequencing that uses enzymes instead of harsh bisulfite chemicals. | Less DNA fragmentation than bisulfite methods; used in TEEM-seq workflows [24]. |

| Twist Human Methylome Panel | A targeted enrichment panel for sequencing-based methylation studies. Covers ~3.98 million CpG sites. | Used in TEEM-seq for focused, cost-effective profiling [24]. |

| R/Bioconductor Packages | Open-source software for statistical analysis and visualization of methylation data. | Essential packages include minfi for preprocessing, limma for differential analysis, and regionalpcs for advanced summaries [27] [14]. |

| Batch Effect Correction Algorithms | Statistical methods to remove technical variation from data. | ComBat-met (for beta-values), iComBat (for incremental data), and ComBat-ref (for RNA-seq) are advanced methods [25] [18] [3]. |

Batch Effect Correction Strategies: From Traditional to AI-Driven Methods

In high-throughput DNA methylation studies, batch effects are systematic technical variations introduced during sample processing by factors such as different experimental dates, reagent lots, or personnel. These non-biological signals can obscure true biological findings, reduce statistical power, and if confounded with the variable of interest, lead to false positive results and irreproducible conclusions [17] [23]. The empirical Bayes framework ComBat (Combating Batch Effects When Combining Batches of Gene Expression Microarray Data) was developed to address this pervasive issue.

ComBat has become a widely adopted tool for batch effect correction because of its ability to borrow information across features (e.g., genes, CpG sites), making it particularly robust even for studies with small sample sizes per batch. Its core methodology uses an empirical Bayes approach to stabilize the estimates of location (mean) and scale (variance) batch effects, thereby preventing overfitting [3] [17].

However, the direct application of the original ComBat, which assumes normally distributed data, to DNA methylation data is problematic. DNA methylation data consists of β-values (methylation proportions ranging from 0 to 1), whose distribution is naturally bounded and often skewed. While a common workaround involves logit-transforming β-values to M-values for ComBat correction, this does not fully respect the inherent characteristics of proportional data [3]. This limitation spurred the development of methylation-specific variants like ComBat-met and iComBat, which are tailored to the unique properties of epigenetic data and modern research needs, such as longitudinal study designs [3] [18].
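
The logit transformation mentioned above is usually written as M = log2(β / (1 − β)). A minimal sketch follows; the clipping constant `eps` is an assumption added here to keep the logarithm finite at β = 0 or β = 1 and is not prescribed by the source.

```python
import math

def beta_to_m(beta, eps=1e-6):
    """Convert a beta-value to an M-value, clipping to avoid log of zero."""
    beta = min(max(beta, eps), 1.0 - eps)
    return math.log2(beta / (1.0 - beta))

def m_to_beta(m):
    """Inverse transformation: M-value back to a beta-value."""
    return 2.0 ** m / (2.0 ** m + 1.0)

for b in (0.0, 0.2, 0.5, 0.9):
    m = beta_to_m(b)
    print(b, round(m, 3), round(m_to_beta(m), 3))
```

The M-value scale is unbounded and roughly symmetric around 0, which is why Gaussian methods such as the original ComBat are typically applied there rather than on raw β-values.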

Methodological Deep Dive: From ComBat to ComBat-met

Core Empirical Bayes Principles

The foundational ComBat algorithm operates through a two-stage empirical Bayes adjustment:

- Model Fitting: It fits a linear model to the data that includes both biological covariates of interest and the batch factors. For each feature, it estimates batch-specific location (additive) and scale (multiplicative) adjustment parameters.

- Parameter Shrinkage: It then shrinks each feature's batch effect parameters toward the average of those parameters across all features in the same batch. This crucial step pools information across features, making the adjustment more robust, especially for small sample sizes and batches with limited data [3] [17].
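
The location and scale adjustment at the heart of the first stage can be illustrated for a single feature. This sketch is deliberately simplified: it includes no covariates and, importantly, no empirical Bayes shrinkage, so it is not ComBat itself. The function name and the example M-values are hypothetical.

```python
from statistics import mean, pstdev

def location_scale_adjust(values, batches):
    """Give every batch the same mean and spread for one feature.

    Simplified on purpose: no covariates and no empirical Bayes shrinkage.
    Assumes each batch has a non-zero standard deviation.
    """
    grouped = {}
    for value, batch in zip(values, batches):
        grouped.setdefault(batch, []).append(value)
    # Per-batch location (mean) and scale (population standard deviation).
    stats = {b: (mean(vs), pstdev(vs)) for b, vs in grouped.items()}
    grand_mean = mean(values)
    # The pooled within-batch standard deviation is the common target scale.
    pooled_var = sum(len(vs) * stats[b][1] ** 2 for b, vs in grouped.items()) / len(values)
    pooled_sd = pooled_var ** 0.5
    return [
        grand_mean + pooled_sd * (v - stats[b][0]) / stats[b][1]
        for v, b in zip(values, batches)
    ]

# Hypothetical M-values for one CpG measured in two batches.
m_values = [1.0, 1.2, 0.8, 1.1, 2.0, 2.6, 1.4, 2.2]
batch_ids = ["b1"] * 4 + ["b2"] * 4
adjusted = location_scale_adjust(m_values, batch_ids)
print([round(v, 3) for v in adjusted])
```

With only four samples per batch, the per-batch mean and standard deviation are noisy estimates. That is the situation the shrinkage stage is designed for: it replaces these raw estimates with ones stabilized by information from all other features.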

The ComBat-met Framework: A Beta Regression Model

ComBat-met addresses the key limitation of traditional ComBat by modeling β-values directly using a beta regression framework, which is naturally suited for proportional data bounded between 0 and 1 [3].

The methodology can be broken down into three key steps:

Model Fitting: For each CpG site, a beta regression model is fitted where the β-value is assumed to follow a beta distribution. The model is parameterized in terms of a mean (μ) and a precision (φ). The model structure is:

- \( g(\mu_{ij}) = \alpha + X_i^T \beta + \gamma_j \)

- \( \log(\phi_{ij}) = \eta + Z_i^T \delta + \lambda_j \)

Here, \(g(\cdot)\) is a logit link function, \(\alpha\) is the common cross-batch average, \(X_i\) are covariate vectors, \(\gamma_j\) is the batch-associated additive effect, \(\eta\) is the log of the common precision, and \(\lambda_j\) is the batch effect on precision [3].

Calculating Batch-Free Distributions: Using the maximum likelihood estimates from the fitted model, ComBat-met calculates the parameters of a batch-free distribution for each feature. This represents the expected distribution of the data in the absence of batch effects [3].

Quantile-Matching Adjustment: The adjusted value for each original β-value is computed by mapping its quantile from the estimated batch-affected distribution to the corresponding quantile of the calculated batch-free distribution. This quantile-mapping step ensures the adjusted data follows the desired batch-free distribution [3].
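
The quantile-matching idea can be sketched for a single β-value once the two beta distributions are known. The example assumes the mean/precision parameterization, in which the shape parameters are a = μφ and b = (1 − μ)φ. To stay dependency-free it evaluates the CDF by numerical integration and inverts it by bisection, which is only reasonable when both shape parameters exceed 1. A production implementation would use a statistics library, and none of the function names or parameter values below come from the ComBat-met software.

```python
import math

def beta_shapes(mu, phi):
    """Mean/precision (mu, phi) -> shape parameters (a, b) of a beta distribution."""
    return mu * phi, (1.0 - mu) * phi

def beta_pdf(x, a, b):
    """Beta density for 0 < x < 1, computed on the log scale for stability."""
    log_norm = math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
    return math.exp(log_norm + (a - 1.0) * math.log(x) + (b - 1.0) * math.log(1.0 - x))

def beta_cdf(x, a, b, steps=2000):
    """Composite Simpson integration of the density on [0, x]; assumes a > 1 and b > 1."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    h = x / steps
    total = beta_pdf(x, a, b)  # the density at 0 is 0 when a > 1, so it adds nothing
    for k in range(1, steps):
        total += (4 if k % 2 else 2) * beta_pdf(k * h, a, b)
    return total * h / 3.0

def beta_quantile(q, a, b):
    """Invert the CDF by bisection on (0, 1)."""
    lo, hi = 0.0, 1.0
    for _ in range(50):
        mid = (lo + hi) / 2.0
        if beta_cdf(mid, a, b) < q:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0

def quantile_match(beta_value, batch_params, batch_free_params):
    """Map a beta-value from the batch-affected to the batch-free distribution."""
    q = beta_cdf(beta_value, *beta_shapes(*batch_params))
    return beta_quantile(q, *beta_shapes(*batch_free_params))

# Hypothetical (mu, phi) estimates for one CpG site.
unchanged = quantile_match(0.35, (0.30, 20.0), (0.30, 20.0))
shifted = quantile_match(0.35, (0.30, 20.0), (0.40, 20.0))
print(round(unchanged, 3), round(shifted, 3))
```

Because the mapping goes through quantiles, the adjusted value always stays inside (0, 1), which a simple shift on the β scale would not guarantee.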

iComBat: An Incremental Extension

For longitudinal studies or clinical trials where new data batches are acquired over time, the requirement to re-correct the entire dataset whenever a new batch is added is computationally inefficient. iComBat was developed to address this. It is an incremental framework based on ComBat that allows newly added batches to be adjusted to previous data without the need to re-process the entire historical dataset. This preserves the original corrected data and is particularly valuable for long-term epigenetic studies of aging or disease progression [18].

Performance Comparison and Experimental Insights

Simulation-Based Performance

Evaluations using simulated data have demonstrated that ComBat-met, when followed by differential methylation analysis, achieves a superior balance of statistical power and false positive control compared to other methods.

Table 1: Comparative Performance of Batch Correction Methods in Simulated Data [3]

| Method | Core Model Assumption | Key Advantage | Reported Performance |

|---|---|---|---|

| ComBat-met | Beta regression | Models bounded nature of β-values | Superior statistical power while controlling false positive rates |

| M-value ComBat | Gaussian (on logit-transformed data) | Widely used, familiar framework | Improved over naïve application, but suboptimal vs. beta regression |

| Naïve ComBat | Gaussian (on raw β-values) | - | Not recommended; violates core model assumptions |

| One-step approach | Gaussian (in linear model) | Simple implementation | Less powerful than dedicated batch correction methods |

| RUVm | Gaussian (on logit-transformed data) | Uses control features | Performance varies based on control feature selection |

A Critical Caveat: Risk of False Positives

A significant body of research highlights a critical caveat when using ComBat and its variants: the potential to systematically introduce false positive findings under certain conditions. This risk is most acute in unbalanced study designs, where the variable of interest (e.g., disease status) is confounded with batch (e.g., all cases processed on one chip, all controls on another) [28] [17].

One simulation study demonstrated that applying ComBat to randomly generated data with no true biological signal produced alarming numbers of false positives after correction, particularly when correcting for multiple batch factors (e.g., chip and row). This effect was exacerbated by smaller sample sizes but was not entirely eliminated even in larger samples [28]. These findings underscore that a balanced study design, where samples from different biological groups are distributed evenly across technical batches, remains the most effective first line of defense against batch effects [17].

Table 2: Key Research Reagent Solutions for DNA Methylation Analysis

| Item / Resource | Function / Description | Relevance to ComBat Workflows |

|---|---|---|

| Bisulfite Conversion Kits | Chemically converts unmethylated cytosines to uracils, preserving methylation marks for PCR-based analysis. | A key source of batch effects; conversion efficiency variations across batches must be corrected [3] [29]. |

| Infinium Methylation BeadChips | Microarray platforms (e.g., 450K, EPIC) for genome-wide methylation profiling at specific CpG sites. | The primary data source for ComBat corrections; effects from chip, row, and sample plate are common targets [28] [17]. |

| Reference Methylated/Unmethylated DNA | Artificially prepared standards with known methylation status. | Used to create standard curves for absolute quantification (e.g., in MethyLight) and can help monitor technical performance [29]. |

| The sva R Package | Contains the ComBat function for applying the original empirical Bayes correction. | The standard implementation for correcting M-value transformed methylation data [28]. |

| The ChAMP R Pipeline | A comprehensive analysis pipeline for methylation BeadChip data that integrates ComBat. | Automates many preprocessing steps; users must carefully inspect its application of ComBat to avoid false positives [28]. |

Troubleshooting Guides and FAQs

FAQ 1: My analysis pipeline produced thousands of significant CpG sites after using ComBat, but none before. What is happening?

This is a classic symptom of the false positive induction problem associated with ComBat, often stemming from an unbalanced study design [17].

- Problem Diagnosis: If your biological groups are perfectly or highly confounded with batch (e.g., all Group A samples were run on Chip 1, all Group B on Chip 2), ComBat may over-correct the data, artificially creating group differences that are not biologically real. This is especially likely in pilot studies with small sample sizes [28] [17].

- Solution Pathway:

- Inspect Your Design: Create a table cross-tabulating your biological groups against technical batches (chips, rows, processing dates). Look for perfect confounders.

- Re-run with a Balanced Subset: If possible, re-process a subset of your samples using a balanced design across batches. This is the most robust solution.

- Use a Reference Batch: If re-processing is impossible, consider using ComBat's reference batch option, where all batches are adjusted to the parameters of a single, designated batch [3].

- Leverage Control Features: If available, use methods like RUVm that leverage control features (e.g., invariant CpGs) to estimate unwanted variation, which can be less prone to this issue [3].
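
The design inspection in the first step of the solution pathway can be sketched as a simple cross-tabulation. The sample annotations and function names below are hypothetical.

```python
from collections import Counter

def cross_tabulate(groups, batches):
    """Count samples for every (biological group, batch) pair."""
    return Counter(zip(groups, batches))

def is_perfectly_confounded(groups, batches):
    """True when every batch holds samples from only one biological group."""
    groups_per_batch = {}
    for group, batch in zip(groups, batches):
        groups_per_batch.setdefault(batch, set()).add(group)
    return all(len(found) == 1 for found in groups_per_batch.values())

# Hypothetical design 1: cases and controls are split across both chips.
groups = ["case", "case", "control", "control", "case", "control"]
balanced_chips = ["chip1", "chip2", "chip1", "chip2", "chip1", "chip2"]
# Hypothetical design 2: all cases on chip1, all controls on chip2.
confounded_chips = ["chip1", "chip1", "chip2", "chip2", "chip1", "chip2"]

table = cross_tabulate(groups, confounded_chips)
for (group, chip), count in sorted(table.items()):
    print(group, chip, count)
print(is_perfectly_confounded(groups, balanced_chips),
      is_perfectly_confounded(groups, confounded_chips))
```

Cells that are missing from the table (here, cases on chip2 and controls on chip1) are the warning sign: in that situation batch and group cannot be statistically separated by any correction method.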

FAQ 2: When should I use ComBat-met over the standard ComBat function for my methylation data?

The choice hinges on the data format and your focus on statistical rigor versus convenience.

- Use ComBat-met when:

- You are working directly with β-values and wish to model their inherent distribution properly.

- Your primary goal is to maximize statistical power for differential methylation analysis while rigorously controlling false positives, as simulations support its superior performance [3].

- You require the option for reference-based adjustment.

- Use Standard ComBat (on M-values) when:

- You are following an established pipeline (e.g., the standard ChAMP pipeline) that operates on M-values.

- Computational efficiency is a major concern and your study design is well-balanced.

- ComBat-met is not available or practical for your workflow.

FAQ 3: How can I handle new batches of data without re-processing my entire existing dataset?

This is a common challenge in longitudinal studies. The recommended solution is to use an incremental batch correction method like iComBat [18].

- Standard Workflow Problem: Traditionally, adding a new batch (e.g., a new time point in a clinical trial) requires combining the new raw data with all previous raw data and running ComBat on the entire dataset again. This changes the previously corrected values, causing inconsistency.

- iComBat Solution: iComBat allows you to correct the new batch of data by aligning it to the already-corrected parameters of the existing dataset. This preserves the original corrected data and ensures consistency across the entire study timeline without the need for full reprocessing [18].

FAQ 4: What are the best practices for diagnosing batch effects before and after correction?

A robust diagnostic approach relies on visualization and statistical testing.

- Before Correction:

- Principal Component Analysis (PCA): Create a PCA plot colored by batch and by biological group. If samples cluster strongly by batch, a batch effect is present. If the batch and group variables are confounded, this signals danger [17].

- Association Testing: Statistically test the association between top principal components and both technical (batch, chip, row) and biological variables. Significant associations with technical variables indicate batch effects [17].

- After Correction:

- Repeat PCA: Generate a new PCA plot after correction. Successful correction is indicated by the loss of batch-related clustering, while biological group differences should remain or become more apparent.

- Monitor p-value Distributions: Be wary of a dramatic inflation in the number of significant findings after correction that was not present before, as this can indicate over-correction and false positive induction [28].

Welcome to the iComBat Technical Support Center

This support portal is designed for researchers, scientists, and drug development professionals working with DNA methylation data in longitudinal studies. Below you will find comprehensive troubleshooting guides, FAQs, and detailed methodologies to address common challenges when implementing iComBat for batch effect correction in multi-platform methylation studies.

Understanding iComBat: Core Concepts

What is iComBat and how does it differ from standard ComBat?

iComBat is an incremental framework for batch effect correction in DNA methylation array data, specifically designed for longitudinal studies where new batches are continuously added over time. Unlike conventional ComBat, which requires simultaneous correction of all samples, iComBat allows adjustment of newly added data without reprocessing previously corrected data, maintaining consistency across the entire dataset [25] [18].

What specific problem does iComBat solve in longitudinal methylation studies?

In long-term studies involving repeated DNA methylation measurements, traditional batch correction methods face significant limitations. When new data batches are added and corrected alongside existing data, the correction parameters change, potentially altering previously corrected data and complicating longitudinal interpretation. iComBat addresses this by providing a stable framework where new batches can be integrated without modifying already-corrected historical data [25] [30].

How does the incremental correction capability of iComBat benefit clinical trials?

iComBat is particularly valuable for clinical trials of anti-aging interventions based on DNA methylation or epigenetic clocks, where repeated measurements are taken over extended periods. It enables consistent evaluation of intervention effects across timepoints without the need for complete reprocessing with each new data collection wave, thus enhancing result reliability and interpretation [18].

Technical Specifications & System Requirements

What are the mathematical foundations of iComBat?

iComBat extends the ComBat methodology, which employs a location/scale adjustment model with empirical Bayes estimation. The model accounts for both additive and multiplicative batch effects:

Model Formulation: The basic model for M-values is:

\( Y_{ijg} = \alpha_g + X_{ij}^\top \beta_g + \gamma_{ig} + \delta_{ig} \varepsilon_{ijg} \)

where \(\gamma_{ig}\) and \(\delta_{ig}\) represent additive and multiplicative batch effects, respectively [25].

Empirical Bayes Framework: The method borrows information across methylation sites within each batch using a Bayesian hierarchical model, providing stable performance even with small sample sizes [25].

What data formats and preprocessing steps does iComBat require?

iComBat is designed for DNA methylation array data and utilizes either Beta-values or M-values:

- Beta-values: Represent methylation proportions ranging from 0 (completely unmethylated) to 1 (completely methylated)

- M-values: Logit-transformed Beta-values providing better statistical properties for analysis [25]

The method assumes data has undergone standard preprocessing specific to your methylation platform (e.g., background correction, normalization) before batch effect correction.

Troubleshooting Common Implementation Issues

Problem: Inconsistent results when adding new batches

Solution:

- Ensure the reference batch parameters are properly saved and loaded when processing new batches

- Verify that covariate information for new batches follows the same structure and coding as previous batches

- Check that the number of methylation sites matches exactly between old and new datasets

Problem: Excessive computation time with large datasets

Solution:

- Utilize the parallel processing capabilities implemented in iComBat

- Consider processing chromosomes separately for genome-wide data

- Ensure sufficient memory allocation for large methylation datasets

Problem: Batch effects persist after correction

Solution:

- Verify that all technical batches are properly documented in the batch covariate

- Check for confounding between biological conditions and batches

- Consider including additional relevant covariates in the model specification

- Validate correction using positive control samples if available

Frequently Asked Questions (FAQs)

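Q: Can iComBat handle very small batch sizes (e.g., n=1-3 samples per batch)? A: Largely yes. iComBat inherits the robustness of traditional ComBat for small batches by borrowing information across methylation sites through its empirical Bayes framework [18]. Note that a batch containing a single sample provides no within-batch variance, so a batch-specific scale adjustment cannot be estimated from that batch alone.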

Q: How does iComBat perform with different methylation measurement technologies? A: While initially validated for microarray data, the methodological framework can potentially be adapted for bisulfite sequencing, enzymatic conversion techniques, and nanopore sequencing data, though platform-specific characteristics should be considered [3].

Q: Is it possible to use iComBat for cross-platform methylation data integration? A: The incremental framework is particularly suited for this application, as new platforms can be treated as additional batches. However, careful validation is recommended using overlapping samples or positive controls to ensure biological signals are preserved [25] [3].

Q: What quality control measures should accompany iComBat implementation? A: We recommend:

- Visual assessment of data before and after correction using PCA

- Monitoring of variance stabilization across batches

- Validation using control samples when available

- Assessment of biological signal preservation through known biomarkers [31]
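Alongside visual inspection, the share of variance attributable to batch can be tracked as a single number before and after correction. The sketch below computes a one-way ANOVA R² per methylation site; the function names are illustrative and this is not an iComBat routine.

```python
def batch_r2(values, batches):
    """Fraction of one feature's variance explained by batch (one-way ANOVA R^2)."""
    grand = sum(values) / len(values)
    ss_total = sum((v - grand) ** 2 for v in values)
    if ss_total == 0:
        return 0.0  # a constant feature carries no batch signal
    groups = {}
    for v, b in zip(values, batches):
        groups.setdefault(b, []).append(v)
    ss_between = sum(len(g) * (sum(g) / len(g) - grand) ** 2 for g in groups.values())
    return ss_between / ss_total

def mean_batch_r2(matrix, batches):
    """Average R^2 over features; `matrix` is a list of per-feature value lists."""
    return sum(batch_r2(row, batches) for row in matrix) / len(matrix)

# Toy data: before correction the batch fully separates the values, afterwards it does not.
batches = ["a", "a", "b", "b"]
before = mean_batch_r2([[1.0, 1.0, 3.0, 3.0], [2.0, 2.0, 6.0, 6.0]], batches)
after = mean_batch_r2([[1.0, 3.0, 1.0, 3.0]], batches)
```

A value close to zero after correction, combined with preserved differences at known biomarkers, is consistent with successful batch removal.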

Experimental Protocols & Workflows

Standard iComBat Implementation Workflow:

Detailed Protocol for Initial iComBat Implementation:

Data Preparation:

- Compile Beta-values or M-values from all available batches

- Create comprehensive batch annotation file specifying batch membership for each sample

- Prepare covariate matrix including biological variables of interest

Initial Model Fitting:

- Estimate global parameters (αg, βg, σg) for each methylation site

- Standardize observed data using these parameter estimates

- Estimate batch effect parameters using empirical Bayes framework

- Apply location/scale adjustment to remove batch effects
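The core of the location/scale adjustment can be sketched for a single methylation site. This is a deliberately simplified illustration, not the iComBat implementation: it omits covariates (βg) and empirical Bayes shrinkage, and it assumes every batch has at least two samples with non-identical values.

```python
import math

def location_scale_adjust(values, batches):
    """Adjust one feature so that every batch shares the same mean and spread."""
    n = len(values)
    alpha = sum(values) / n  # overall level of the feature
    index = {}
    for i, b in enumerate(batches):
        index.setdefault(b, []).append(i)
    batch_mean = {b: sum(values[i] for i in idx) / len(idx) for b, idx in index.items()}
    # Pooled residual standard deviation after removing the batch means.
    sigma = math.sqrt(sum((v - batch_mean[b]) ** 2 for v, b in zip(values, batches)) / n)
    z = [(v - alpha) / sigma for v in values]  # standardized data
    adjusted = [0.0] * n
    for b, idx in index.items():
        gamma = sum(z[i] for i in idx) / len(idx)  # additive batch effect
        delta = math.sqrt(sum((z[i] - gamma) ** 2 for i in idx) / len(idx))  # multiplicative effect
        for i in idx:
            adjusted[i] = sigma * (z[i] - gamma) / delta + alpha
    return adjusted

# Two batches with different means and spreads (M-value scale).
vals = [1.0, 2.0, 3.0, 11.0, 13.0, 15.0]
bat = ["a", "a", "a", "b", "b", "b"]
adj = location_scale_adjust(vals, bat)
```

In the full method, the per-batch estimates are shrunk toward values shared across sites before the adjustment is applied, which is what stabilizes the correction for small batches.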

Parameter Storage:

- Save all model parameters, hyperparameters, and reference distributions

- Document preprocessing steps and normalization parameters

- Retain covariate model specifications for consistent future application

Protocol for Adding New Batches:

New Data Quality Control:

- Perform standard quality checks on new methylation data

- Ensure compatibility with previously processed data (same probe sets, similar distributions)

Incremental Correction:

- Load previously saved model parameters and reference distributions

- Apply correction to new batches using stored parameters without modifying original data

- Integrate corrected new data with previously corrected datasets
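The idea of correcting a new batch against stored parameters, without touching earlier data, can be sketched as follows. Everything here is an assumption made for illustration: the JSON file layout, the function names, and the reduction to a single site with stored `alpha` and `sigma` only. The actual method also stores and reuses hyperparameters for empirical Bayes shrinkage, which this sketch leaves out.

```python
import json
import math
import os
import tempfile

def save_params(path, alpha, sigma):
    """Persist the reference level and scale of one feature."""
    with open(path, "w") as fh:
        json.dump({"alpha": alpha, "sigma": sigma}, fh)

def load_params(path):
    with open(path) as fh:
        p = json.load(fh)
    return p["alpha"], p["sigma"]

def correct_new_batch(new_values, alpha, sigma):
    """Align a new batch to the stored reference level and scale."""
    z = [(v - alpha) / sigma for v in new_values]  # standardize with stored parameters
    gamma = sum(z) / len(z)  # additive effect of the new batch
    delta = math.sqrt(sum((x - gamma) ** 2 for x in z) / len(z))  # multiplicative effect
    return [sigma * (x - gamma) / delta + alpha for x in z]

# Parameters from the initial fit are written once and reused later.
path = os.path.join(tempfile.mkdtemp(), "feature_params.json")
save_params(path, alpha=0.4, sigma=0.5)
alpha, sigma = load_params(path)
corrected = correct_new_batch([1.1, 1.3, 1.5], alpha, sigma)  # M-values of the new batch
```

Only the new batch is transformed; previously corrected samples remain exactly as they were, which is the property that makes the approach incremental.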

Validation:

- Assess integration quality using visualization methods (PCA, UMAP)

- Verify preservation of biological signals using control features

- Document any deviations or special handling requirements

Comparative Methodologies

Table 1: Comparison of Batch Effect Correction Methods for DNA Methylation Data

| Method | Primary Approach | Incremental Capability | Optimal Use Case |
|---|---|---|---|
| iComBat | Location/scale adjustment with empirical Bayes | Yes | Longitudinal studies with sequential data collection |
| Standard ComBat | Location/scale adjustment with empirical Bayes | No | Cross-sectional studies with complete data |
| ComBat-met | Beta regression framework | No | Methylation data with strong beta distribution characteristics |
| SVA/RUV | Latent factor estimation | Limited | Studies with unknown sources of variation |
| Quantile Normalization | Distribution alignment | No | Technical replication studies |

Table 2: Key Parameters in iComBat Empirical Bayes Estimation

| Parameter | Symbol | Estimation Method | Role in Correction |
|---|---|---|---|
| Additive batch effect | γig | Empirical Bayes | Corrects mean shifts between batches |
| Multiplicative batch effect | δig | Empirical Bayes | Corrects variance differences between batches |
| Cross-batch average | αg | Method of moments | Establishes reference level for correction |
| Regression coefficients | βg | Ordinary least squares | Preserves biological signal during correction |
| Hyperparameters | γi, τi², ζi, θi | Method of moments | Enables information sharing across features |

Research Reagent Solutions

Table 3: Essential Materials for iComBat Implementation in Methylation Studies

| Reagent/Resource | Function | Implementation Notes |
|---|---|---|
| Reference control samples | Batch effect monitoring | Include in each batch to track technical variation |
| DNA methylation reference standards | Quality control | Commercial standards for platform performance validation |
| Bridging samples | Longitudinal consistency | Aliquots from same source processed across multiple batches |
| Epigenetic control materials | Biological validation | Verify preservation of known methylation patterns post-correction |
| iComBat R package | Primary analysis tool | Available through scientific repositories |
| Parallel computing resources | Computational efficiency | Essential for large-scale epigenome-wide analyses |

For additional technical support or specific implementation challenges not addressed in this guide, please consult the primary iComBat literature [25] [18] or statistical software documentation. Remember that proper experimental design, including randomized processing of samples across batches and inclusion of reference samples, significantly enhances the performance of any batch correction method, including iComBat [31].

Batch effects are technical variations introduced during high-throughput experiments by differences in experimental conditions, reagent lots, processing times, or laboratory personnel [23]. In DNA methylation studies, these artifacts are particularly problematic because they can obscure true biological signals, increase variability, reduce statistical power, and lead to incorrect conclusions in downstream analyses [3] [17]. In severe cases, key results could not be reproduced because of technical artifacts and the publications were retracted [23].

DNA methylation data present unique challenges for batch correction because they consist of β-values, which are methylation proportions constrained between 0 and 1 [3]. Traditional batch correction methods such as ComBat and ComBat-seq, while successful for microarray and RNA-seq data respectively, assume normally distributed or count-based data and are therefore suboptimal for proportion-based methylation values [3]. The distribution of β-values often exhibits skewness and over-dispersion, violating the assumptions of these general-purpose methods [3].

ComBat-met is a specialized solution to this problem: a beta regression framework designed to adjust batch effects in DNA methylation data while respecting the bounded, proportion-based nature of β-values [3] [32]. It fits a beta regression model to each feature, estimates the corresponding batch-free distribution, and maps each observed value from its quantile in the fitted distribution to the same quantile in the batch-free distribution. In this way ComBat-met removes technical variation while preserving the biological signals of interest [3].
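The quantile-mapping step can be written compactly. The notation below is an assumption introduced here for illustration and is not taken from the ComBat-met publication: $F(\cdot;\mu,\phi)$ denotes the cumulative distribution function of a beta distribution with mean $\mu$ and precision $\phi$, $(\hat{\mu}_{ij}, \hat{\phi}_{i})$ are the parameters fitted for sample $j$ in batch $i$, and $(\tilde{\mu}_{j}, \tilde{\phi})$ are their batch-free counterparts for the same feature.

```latex
\tilde{y}_{ij} \;=\; F^{-1}\!\Big( F\big(y_{ij};\, \hat{\mu}_{ij}, \hat{\phi}_{i}\big);\; \tilde{\mu}_{j}, \tilde{\phi} \Big)
```

A value that sits at, say, the 90th percentile of its batch-specific distribution is moved to the 90th percentile of the batch-free distribution, so the adjusted value always remains inside the interval (0, 1).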

Frequently Asked Questions (FAQs)

Q1: What distinguishes ComBat-met from other batch effect correction methods for methylation data?

ComBat-met differs from other methods in using beta regression, which is designed for proportion-based β-values. M-value ComBat first applies a logit transformation to β-values so that a normal model becomes reasonable, and methods such as SVA and RUVm also operate on transformed data. ComBat-met instead models the bounded β-values directly with a beta distribution [3] [32]. This approach better captures the inherent characteristics of DNA methylation data, including potential skewness and over-dispersion [3].

Q2: In what scenarios would ComBat-met be particularly advantageous over other methods?

ComBat-met provides particular advantages in:

- Studies with severe batch effects where normalization alone is insufficient [33]

- Datasets with markedly skewed or unbalanced β-value distributions

- Multi-platform methylation studies integrating data from different technologies

- Situations where preserving true biological signals is critical

- Analyses requiring high statistical power for differential methylation detection [3]

Benchmarking analyses demonstrate that ComBat-met followed by differential methylation analysis achieves superior statistical power compared to traditional approaches while correctly controlling Type I error rates in nearly all cases [3].

Q3: What are the common pitfalls when applying ComBat-met, and how can they be avoided?

A critical pitfall involves applying batch correction to studies with unbalanced designs where biological variables of interest are confounded with batch variables [17]. This can introduce false biological signal rather than remove technical noise. One documented case showed that applying ComBat to an unbalanced study design resulted in 9,612 significant DNA methylation differences despite none being present prior to correction [17].

Prevention strategies include:

- Implementing stratified randomization during study design to distribute biological groups equally across batches

- Thoroughly testing for batch effects using PCA before and after correction

- Maintaining communication between laboratory technicians and data analysts to understand potential confounding factors [17]
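Stratified randomization, the first strategy above, can be sketched in a few lines. The function below is a hypothetical illustration rather than a prescribed procedure: it shuffles samples within each biological group and deals them to batches in round-robin order, so that each group is spread as evenly as possible.

```python
import random
from collections import defaultdict

def stratified_batch_assignment(sample_groups, n_batches, seed=0):
    """Map sample ID -> batch index, balancing each biological group across batches.

    sample_groups: dict of sample ID -> biological group label.
    """
    rng = random.Random(seed)  # fixed seed keeps the layout reproducible
    by_group = defaultdict(list)
    for sample, group in sample_groups.items():
        by_group[group].append(sample)
    assignment = {}
    for group in sorted(by_group):
        members = sorted(by_group[group])
        rng.shuffle(members)
        offset = rng.randrange(n_batches)  # avoid always starting with batch 0
        for k, sample in enumerate(members):
            assignment[sample] = (k + offset) % n_batches
    return assignment

# Eight cases and eight controls distributed over four batches.
samples = {f"case{i}": "case" for i in range(8)}
samples.update({f"ctrl{i}": "control" for i in range(8)})
layout = stratified_batch_assignment(samples, n_batches=4)
```

With group sizes that are multiples of the number of batches, every batch receives the same number of samples from each group; otherwise counts differ by at most one.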

Q4: How computationally intensive is ComBat-met, and what optimization options are available?

While beta regression models can be computationally demanding, especially with large datasets [34], ComBat-met implements parallelization using the parLapply() function from the parallel R package to improve computational efficiency [3]. The model fitting is highly parallelizable as it is applied independently to each feature, enabling concurrent processing across multiple threads [3]. For extremely large datasets, the developers also provide an optional empirical Bayes shrinkage method, though the standard approach without shrinkage is generally recommended [3].

Performance Comparison of Batch Effect Correction Methods

Table 1: Comparative performance of batch effect correction methods based on simulation studies

| Method | Underlying Approach | Data Transformation | True Positive Rate | False Positive Rate | Key Strengths |
|---|---|---|---|---|---|
| ComBat-met | Beta regression | Direct modeling of β-values | Highest | Properly controlled | Specifically designed for β-value characteristics |
| M-value ComBat | Empirical Bayes | Logit transformation to M-values | Moderate | Properly controlled | Established method, widely used |
| SVA | Surrogate variable analysis | Logit transformation to M-values | Moderate | Properly controlled | Does not require predefined batch information |
| Including Batch as Covariate | Linear modeling | Logit transformation to M-values | Lower | Properly controlled | Simple implementation |
| BEclear | Latent factor models | Direct modeling of β-values | Moderate | Properly controlled | Specifically for methylation data |
| RUVm | Remove unwanted variation | Logit transformation to M-values | Moderate | Properly controlled | Uses control features |

Table 2: Percentage of variation explained by batch effects in TCGA data after different correction methods

| Method | Normal Samples | Tumor Samples | Interpretation |
|---|---|---|---|
| Uncorrected Data | Highest percentage | Highest percentage | Batch effects dominate biological signal |
| M-value ComBat | Moderate percentage | Moderate percentage | Substantial batch effects remain |
| SVA | Moderate percentage | Moderate percentage | Substantial batch effects remain |
| BEclear | Low percentage | Low percentage | Effective batch effect removal |
| RUVm | Low percentage | Low percentage | Effective batch effect removal |
| ComBat-met | Lowest percentage | Lowest percentage | Most effective batch effect removal |

Step-by-Step Experimental Protocols

Protocol 1: Basic ComBat-met Implementation for DNA Methylation Data

Purpose: Remove batch effects from DNA methylation β-values while preserving biological signals.

Materials and Reagents:

- DNA methylation dataset (β-values matrix)

- R statistical environment (version 4.0 or higher)

- ComBat-met R package (available from GitHub: JmWangBio/ComBatMet)

Procedure:

- Data Preparation: Format your DNA methylation data as a matrix where rows represent CpG sites/features and columns represent samples.

- Batch Information: Create a batch vector indicating the batch membership for each sample.

- Biological Conditions: Specify biological groups if available.

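- Execute ComBat-met: Run the package's correction function on the β-value matrix, supplying the batch vector and, if available, the biological group labels.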

- Quality Assessment: Perform PCA and visualize data before and after correction to assess batch effect removal [32].

Troubleshooting Tips:

- If convergence issues occur, verify that batch groups have sufficient sample size

- For large datasets, utilize parallelization to improve computational efficiency

- Always compare pre- and post-correction PCA plots to ensure biological signals are preserved

Protocol 2: Reference-Based Batch Correction

Purpose: Adjust all batches to align with a specific reference batch.

Procedure:

- Identify Reference Batch: Select a batch with high data quality as reference.

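- Execute Reference-Based Correction: Run ComBat-met with the selected batch designated as the reference, so that the remaining batches are adjusted toward it.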

- Validation: Compare distributions of β-values across batches before and after correction.
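The distribution comparison in the validation step can be summarized numerically as well as visually. The sketch below is one possible approach using only per-batch medians and interquartile ranges; the helper names are hypothetical and the toy values are made up for illustration.

```python
from statistics import median, quantiles

def batch_summary(values, batches):
    """Median and interquartile range of β-values per batch."""
    groups = {}
    for v, b in zip(values, batches):
        groups.setdefault(b, []).append(v)
    summary = {}
    for b, vals in groups.items():
        q1, _, q3 = quantiles(vals, n=4)  # needs at least two values per batch
        summary[b] = {"median": median(vals), "iqr": q3 - q1}
    return summary

def max_median_gap(summary):
    """Largest difference between batch medians; smaller means better aligned."""
    medians = [s["median"] for s in summary.values()]
    return max(medians) - min(medians)

# Toy β-values for one site in a reference batch (a) and a second batch (b).
batches = ["a", "a", "a", "b", "b", "b"]
gap_before = max_median_gap(batch_summary([0.1, 0.2, 0.3, 0.7, 0.8, 0.9], batches))
gap_after = max_median_gap(batch_summary([0.1, 0.2, 0.3, 0.1, 0.2, 0.3], batches))
```

After a reference-based correction, the summaries of the non-reference batches should move toward those of the reference batch, while the reference batch itself stays unchanged.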

Protocol 3: Comprehensive Performance Validation